@background404/node-red-contrib-llm-plugin 0.5.0

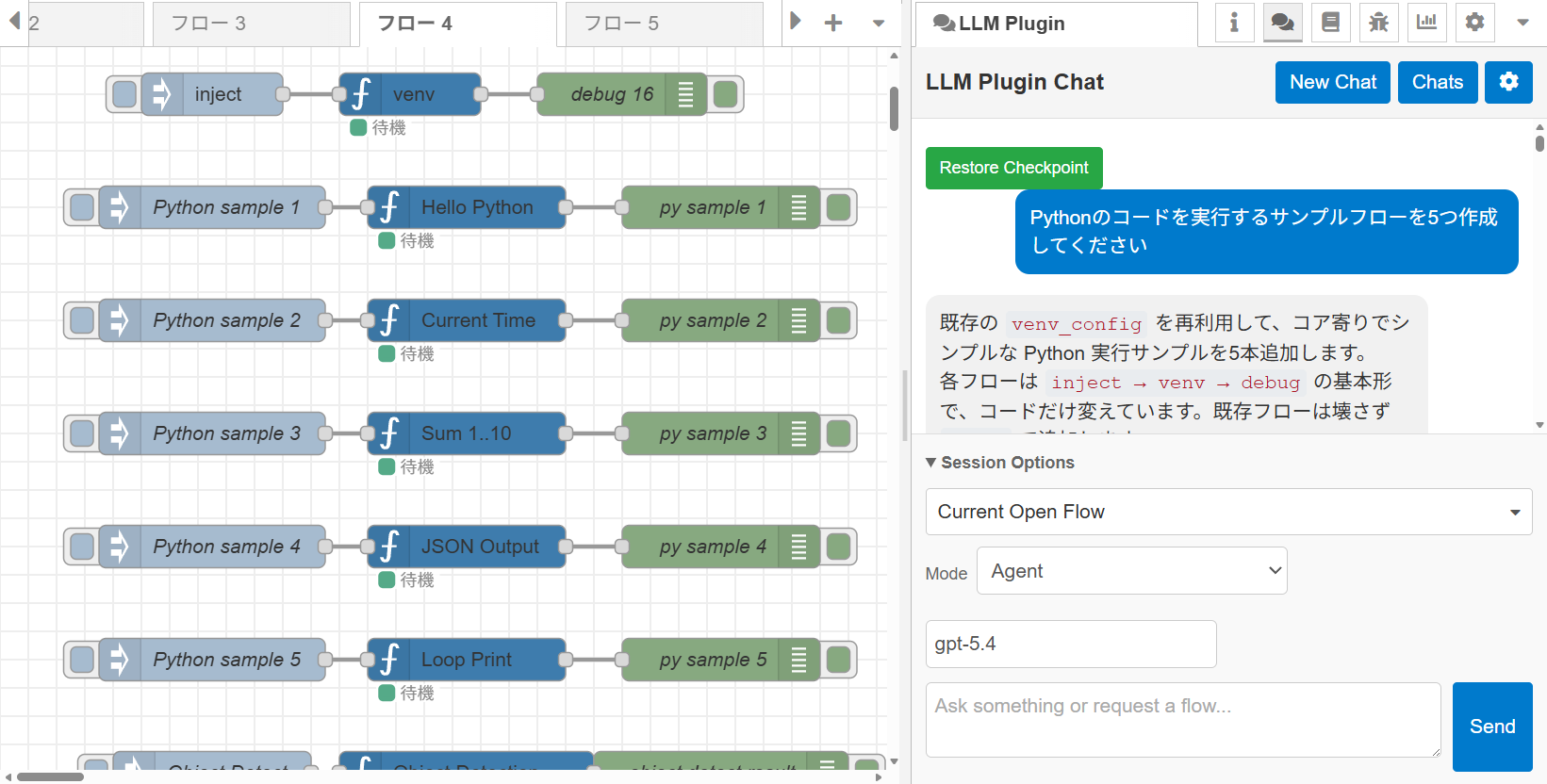

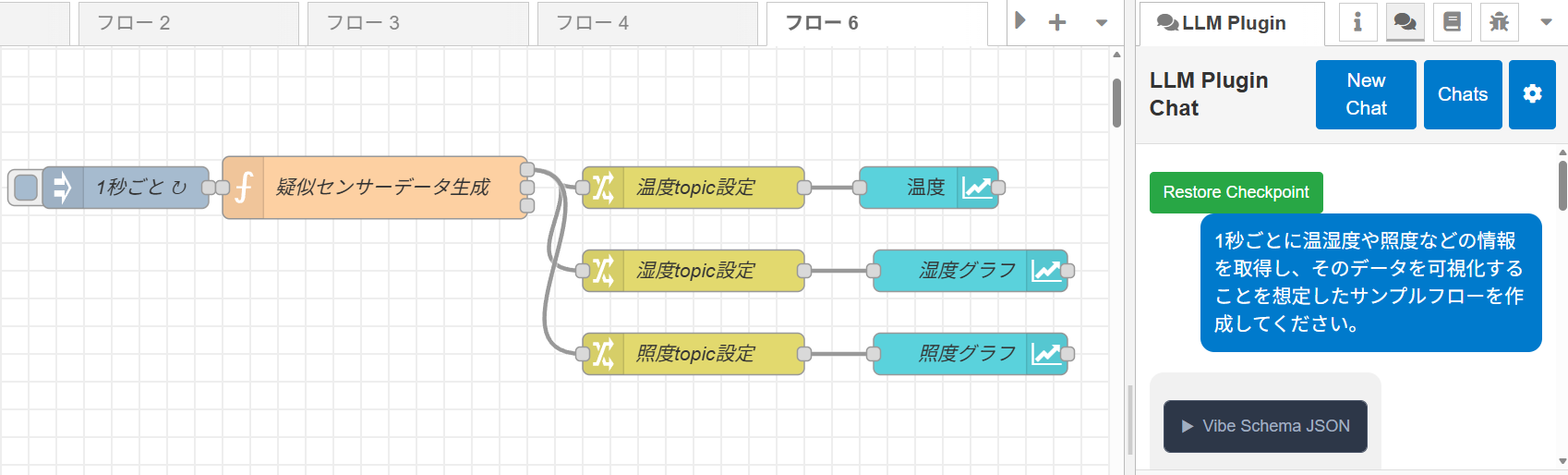

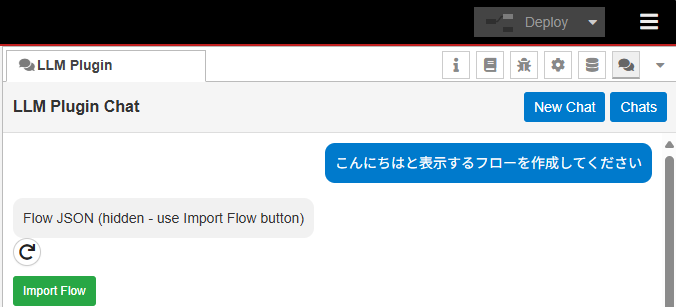

Node-RED sidebar plugin for chatting with LLMs (Ollama / OpenAI / Custom OpenAI-compatible endpoints), generating flows, and importing results

LLM Plugin for Node-RED

LLM Plugin is a Node-RED sidebar extension for chatting with LLMs, generating/modifying flows, and importing results into the active tab.

Demos

Click the image below to watch the video:

Install

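Install from the "Manage palette" menu in the Node-RED editor, or run the following in your Node-RED user directory (typically ~/.node-red):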

npm install @background404/node-red-contrib-llm-plugin

Restart Node-RED after install.

Quick Start

- Open the LLM Plugin sidebar.

- Configure provider in Settings:

  - Ollama: set URL (default http://localhost:11434)

  - OpenAI: set API key

  - Custom (OpenAI-compatible): set Base URL (e.g. http://localhost:8080/v1) and, if required, an API key. Use this for llama.cpp, LM Studio, vLLM, LocalAI, or any other server speaking the OpenAI chat-completions API.

- Pick which flow tabs to include via the flow selector (defaults to Current Open Flow; check additional tabs in the dropdown to send them too).

- Select Agent mode for auto-apply, or Ask mode for manual import.

- Enter model and prompt.

- Click Send to generate and/or apply the flow.
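To make the provider settings above more concrete, here is a minimal sketch of how a chat request could be built for each provider type. The `buildChatRequest` helper, the shape of the `settings` object, and the model name are hypothetical and are not taken from the plugin's source. Only the endpoint paths (`/api/chat` for Ollama, `/chat/completions` for OpenAI-style servers) come from those providers' public APIs.

```javascript
// Hypothetical helper (not the plugin's actual code): builds an HTTP request
// descriptor for the configured provider.
function buildChatRequest(settings, model, prompt) {
  const messages = [{ role: "user", content: prompt }];
  const headers = { "Content-Type": "application/json" };

  if (settings.provider === "ollama") {
    // Ollama's native chat endpoint; falls back to the default local URL.
    const base = (settings.url || "http://localhost:11434").replace(/\/+$/, "");
    return {
      url: `${base}/api/chat`,
      options: {
        method: "POST",
        headers,
        body: JSON.stringify({ model, messages, stream: false }),
      },
    };
  }

  // OpenAI and OpenAI-compatible servers share the chat-completions route.
  const rawBase =
    settings.provider === "openai" ? "https://api.openai.com/v1" : settings.baseUrl;
  if (!rawBase) throw new Error("Base URL is required for custom endpoints");
  const base = rawBase.replace(/\/+$/, "");

  // The API key is optional for many local servers.
  if (settings.apiKey) headers.Authorization = `Bearer ${settings.apiKey}`;

  return {
    url: `${base}/chat/completions`,
    options: { method: "POST", headers, body: JSON.stringify({ model, messages }) },
  };
}

const req = buildChatRequest(
  { provider: "custom", baseUrl: "http://localhost:8080/v1/" },
  "my-local-model", // placeholder model name
  "Create a flow that logs a timestamp every minute."
);
console.log(req.url); // http://localhost:8080/v1/chat/completions
```

On Node.js 18 or later, the descriptor could be sent with `await fetch(req.url, req.options)`. Keeping request construction separate from the network call makes the provider differences easy to inspect without a running server.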

Recommended Usage

Before prompting, add any custom or non-core nodes you want the LLM to use to your flow. The LLM does not inherently know the required properties of custom nodes, so keep a small sample flow containing them in the active tab; it is then sent as the Current Open Flow. The model can then follow real node and property patterns from that sample instead of relying on fixed per-node prompt rules.

Features

- Chat history: conversations are persisted on the server and can be loaded, deleted, or continued across sessions.

- Checkpoint / Restore: a snapshot of the flow is taken immediately before each import, and a per-message Restore button rewinds the workspace to that pre-edit state.

- Custom system prompt: add persistent instructions (preferred node types, coding style, language) via Settings.
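The Checkpoint / Restore behavior can be pictured with a small sketch: take a deep copy of the flow right before an import, keyed by the chat message that triggered it, and hand that copy back on restore. The `CheckpointStore` class and `importWithCheckpoint` function are hypothetical names, and the in-memory `Map` is an assumption for illustration. The plugin's real storage and data shapes may differ.

```javascript
// Hypothetical in-memory checkpoint store keyed by chat message id.
class CheckpointStore {
  constructor() {
    this.snapshots = new Map();
  }

  // Deep-copy the flow so later edits cannot mutate the snapshot.
  take(messageId, flowNodes) {
    this.snapshots.set(messageId, JSON.parse(JSON.stringify(flowNodes)));
  }

  // Return a fresh copy of the pre-import state, or null if none exists.
  restore(messageId) {
    const snap = this.snapshots.get(messageId);
    return snap ? JSON.parse(JSON.stringify(snap)) : null;
  }
}

// Snapshot first, then apply the import, so the checkpoint always holds
// the pre-edit state.
function importWithCheckpoint(store, messageId, currentFlow, applyImport) {
  store.take(messageId, currentFlow);
  return applyImport(currentFlow);
}

const store = new CheckpointStore();
let flow = [{ id: "n1", type: "inject", wires: [[]] }];

flow = importWithCheckpoint(store, "msg-1", flow, (nodes) => [
  ...nodes,
  { id: "n2", type: "debug", wires: [] },
]);
console.log(flow.length); // 2

flow = store.restore("msg-1");
console.log(flow.length); // 1
```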

Flow Import

- Supports Vibe Schema and raw Node-RED JSON.

- Accepts mixed response text + JSON (with or without code fences).

- Parses reliably even when function code inside JSON strings contains comment tokens (such as // or /*).

- Agent mode applies connection (wire) updates and LLM-requested deletions automatically.

- All schema applies use merge semantics: listed nodes are added or updated, aliases mapped to null are deleted, and anything not mentioned is left alone.
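The merge semantics described above can be sketched as follows. The data shapes are assumptions for illustration only: both the current flow and the patch are modeled as plain objects keyed by alias, which is not necessarily how Vibe Schema represents them. The shallow property merge on update is likewise a guess at what "updated" means.

```javascript
// Assumed shapes (hypothetical):
//   current: { [alias]: nodeObject }
//   patch:   { [alias]: nodeObject | null }
function mergeFlow(current, patch) {
  const result = { ...current }; // untouched aliases carry over as-is
  for (const [alias, node] of Object.entries(patch)) {
    if (node === null) {
      delete result[alias]; // alias mapped to null: delete
    } else {
      // Listed alias: add it, or shallow-merge into the existing node.
      result[alias] = { ...result[alias], ...node };
    }
  }
  return result;
}

const current = {
  timer: { type: "inject", repeat: "60" },
  log: { type: "debug" },
  old: { type: "function" },
};

const patch = {
  timer: { repeat: "10" },          // update an existing node
  old: null,                        // delete
  notify: { type: "http request" }, // add a new node
};

const merged = mergeFlow(current, patch);
console.log(Object.keys(merged)); // [ 'timer', 'log', 'notify' ]
console.log(merged.timer);        // { type: 'inject', repeat: '10' }
```

Because `log` is not mentioned in the patch, it passes through unchanged, and the original `current` object is not mutated.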

More Docs

- Implementation guide: src/README.md

- Prompt template: src/prompt_system.txt

Security Notice

API keys (OpenAI and Custom-endpoint) are stored encrypted in <userDir>/llm-plugin/credentials.json using AES-256-CTR with your Node-RED credentialSecret (the same algorithm Node-RED uses for flows_cred.json). Non-secret settings stay in RED.settings. The plugin also masks keys in the UI and redacts them from logs.

The encrypted file is only as safe as your credentialSecret. When sharing your Node-RED user directory (Git, backups, environment exports), keep credentials.json, flows_cred.json, .config.*.json, and your settings.js out of the share — and never publish your credentialSecret. Older installs that stored the key in plaintext are migrated to the encrypted file automatically on first boot.
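For readers unfamiliar with the scheme named above, here is a minimal sketch of AES-256-CTR encryption with a secret-derived key, using Node's built-in `crypto` module. Several details are assumptions and not confirmed by this README: deriving the key as the SHA-256 hash of the secret, the stored format (hex IV followed by base64 ciphertext), and the `maskKey` display format. The function names are hypothetical.

```javascript
const crypto = require("crypto");

// Assumption: the 32-byte AES key is the SHA-256 hash of the secret.
function deriveKey(credentialSecret) {
  return crypto.createHash("sha256").update(credentialSecret).digest();
}

function encrypt(plainText, credentialSecret) {
  const iv = crypto.randomBytes(16); // fresh IV for every encryption
  const cipher = crypto.createCipheriv("aes-256-ctr", deriveKey(credentialSecret), iv);
  const data = Buffer.concat([cipher.update(plainText, "utf8"), cipher.final()]);
  // Hypothetical storage format: 32 hex chars of IV, then base64 ciphertext.
  return iv.toString("hex") + data.toString("base64");
}

function decrypt(stored, credentialSecret) {
  const iv = Buffer.from(stored.slice(0, 32), "hex");
  const data = Buffer.from(stored.slice(32), "base64");
  const decipher = crypto.createDecipheriv("aes-256-ctr", deriveKey(credentialSecret), iv);
  return Buffer.concat([decipher.update(data), decipher.final()]).toString("utf8");
}

// Hypothetical UI/log masking: reveal only the last four characters.
function maskKey(key) {
  return key.length <= 4 ? "****" : "****" + key.slice(-4);
}

const secret = "example-credential-secret"; // placeholder, never hard-code a real one
const stored = encrypt(JSON.stringify({ openaiApiKey: "sk-example-1234" }), secret);
console.log(JSON.parse(decrypt(stored, secret)).openaiApiKey); // sk-example-1234
console.log(maskKey("sk-example-1234")); // ****1234
```

CTR mode is unauthenticated, so decrypting with the wrong secret yields garbage rather than an error. This is one more reason the file is only as safe as the secret protecting it.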

Notes

- This plugin is under active development.

- Model output quality varies by model and prompt.

- Cloud / sandboxed Node-RED hosts (e.g. enebular): chat history and flow checkpoints are persisted to <userDir>/llm-plugin/ when that location is writable, else to the OS temp directory, else in-memory only. The plugin never writes to its own install dir, so it loads cleanly on read-only plugin filesystems.
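The storage fallback order in the last note can be sketched like this. The `resolveStorage` and `isWritable` names are hypothetical, and the `llm-plugin` subfolder inside the OS temp directory is an assumption. The README only says that the temp directory is used.

```javascript
const fs = require("fs");
const os = require("os");
const path = require("path");

// True if the directory exists (or can be created) and accepts writes.
function isWritable(dir) {
  try {
    fs.mkdirSync(dir, { recursive: true });
    fs.accessSync(dir, fs.constants.W_OK);
    return true;
  } catch (err) {
    return false;
  }
}

// Hypothetical resolver: userDir first, then the OS temp dir, then memory.
function resolveStorage(userDir) {
  const candidates = [];
  if (userDir) candidates.push(path.join(userDir, "llm-plugin"));
  candidates.push(path.join(os.tmpdir(), "llm-plugin")); // assumed subfolder name

  for (const dir of candidates) {
    if (isWritable(dir)) return { mode: "disk", dir };
  }
  // Nothing writable: keep history and checkpoints in memory only.
  return { mode: "memory", dir: null };
}

const storage = resolveStorage(process.env.NODE_RED_HOME_EXAMPLE); // placeholder variable
console.log(storage.mode, storage.dir);
```

Note that the install directory never appears in the candidate list, which matches the statement that the plugin does not write there.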

Links

Please report issues at: GitHub Issues

Node-RED API Reference

My article (in Japanese): "Trying Out Node-RED Plugin Development, Part 2 (LLM Plugin v0.4.0)"